CASE STUDY

Modernizing Private Credit Infrastructure Through Governed Tokenization

AI

Product Strategy

Web3

TOKENIZED FINANCIAL MARKETS

Web3 Product and Strategy Lead

A global financial institution was evaluating tokenization to modernize private credit origination and servicing across corporate and infrastructure portfolios. Leadership required improved lifecycle transparency and monitoring discipline without weakening capital governance or regulatory posture.

I led the design of a governance-centered operating model that aligned legal, compliance, treasury, credit risk, and technology stakeholders around eligibility thresholds, capital authorization rules, escalation triggers, and board-level reporting architecture. The work focused on institutional control design, not token mechanics.

Challenge

Private credit operations relied on fragmented underwriting documentation, manual covenant tracking, opaque servicing workflows, and reconciliation-heavy reporting processes across multi-jurisdictional portfolios.

These limitations introduced operational friction, delayed decision-making, and reduced transparency into asset performance and risk exposure.

Tokenization presented an opportunity to modernize these workflows through improved data synchronization, lifecycle visibility, and programmable asset structures. However, existing institutional infrastructure and governance models were not designed to support tokenized financial assets operating across legal, operational, and regulatory boundaries.

This created a structural gap between legacy credit operations and emerging digital asset infrastructure. Leadership lacked a structured way to determine how tokenization could be introduced without disrupting servicing models, weakening control frameworks, or creating regulatory exposure.

The opportunity was to design a governance-first tokenization strategy that modernized private credit infrastructure while preserving institutional control, compliance integrity, and operational continuity.

Key Drivers

- Fragmented underwriting, servicing, and reporting workflows across private credit portfolios

- Limited transparency into asset performance, covenant status, and lifecycle events

- Operational inefficiencies driven by reconciliation-heavy processes

- Increasing market interest in tokenized financial assets and programmable infrastructure

- Regulatory and governance complexity across multi-jurisdictional credit portfolios

- Need to modernize infrastructure without disrupting existing operational and control models

My Role

I served as Web3 Product and Strategy Lead, accountable for defining the institutional tokenization operating model and aligning cross-functional governance stakeholders.

I structured asset eligibility thresholds, formalized capital gating criteria, defined escalation triggers, embedded lifecycle control checkpoints, and translated modernization objectives into board-ready decision frameworks. I preserved regulatory alignment while enabling controlled pilot authorization.

Scope

- Executive alignment across risk, treasury, compliance, and technology

- Asset eligibility and counterparty restriction framework

- Regulatory and capital gating architecture

- Lifecycle workflow redesign under control nodes

- AI monitoring instrumentation modeling

- Executive and board reporting architecture

Approach & Methodology

Approach

- Systems-first transformation design

- Governance discipline before automation

- Capital authorization before feature expansion

- Phased deployment under exposure caps

- Human accountability over autonomous enforcement

Methodology

- Cross-functional working sessions across legal, risk, and treasury

- Regulatory classification scenario modeling

- Lifecycle governance mapping workshops

- Exposure concentration simulation modeling

- Escalation trigger definition and threshold testing

- Executive oversight cadence design

- AI monitoring signal modeling

Solution

The solution was a governance-first tokenization system structured around lifecycle visibility, operational alignment, control frameworks, and phased infrastructure integration. These components defined how tokenized assets could be introduced into private credit operations while preserving institutional controls, compliance integrity, and servicing continuity.

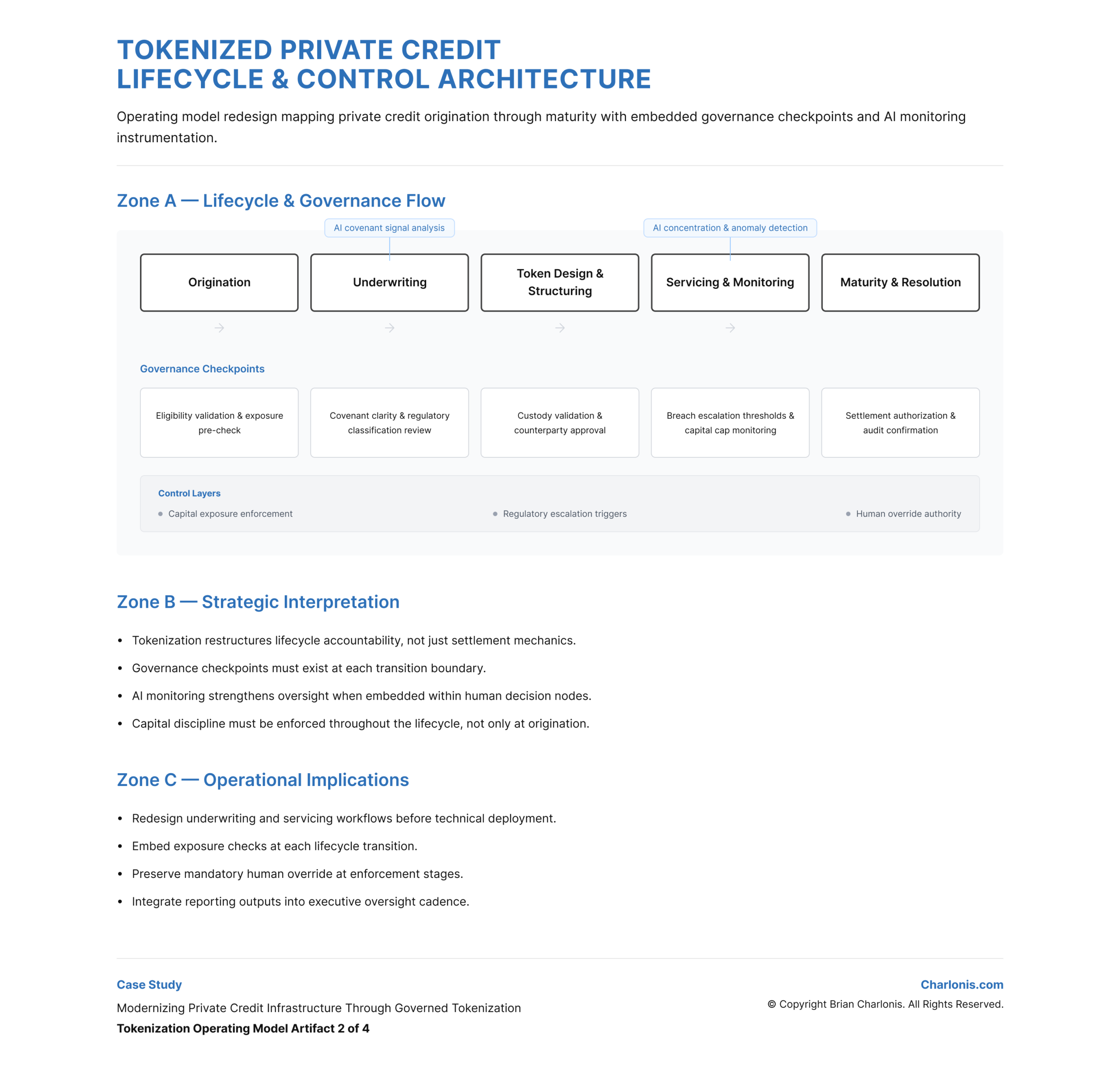

Lifecycle Visibility & Data Synchronization

Defined mechanisms for tracking asset state, performance, and covenant status across the credit lifecycle.

This improved transparency into asset performance and reduced reliance on reconciliation-heavy reporting processes.

This artifact defines how asset performance and lifecycle events are monitored.

View Figma Prototype:

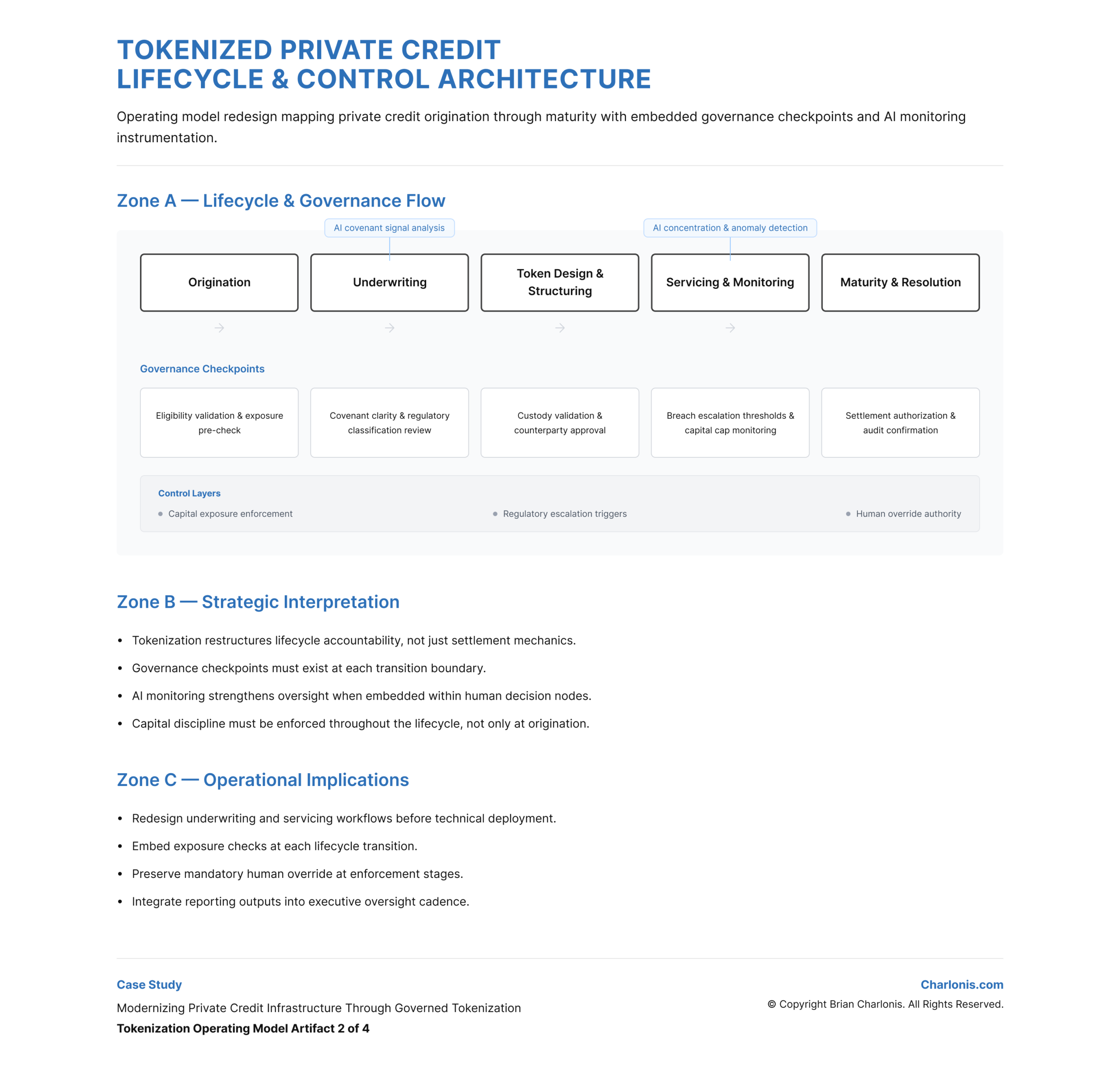

TOKENIZED PRIVATE CREDIT LIFECYCLE & CONTROL ARCHITECTURE

Operational Workflow Integration

Aligned tokenized asset structures with existing underwriting, servicing, and reporting workflows.

This ensured that tokenization enhanced existing operations without disrupting institutional processes or control points.

This artifact defines how tokenization integrates into private credit operations.

View Figma Prototype:

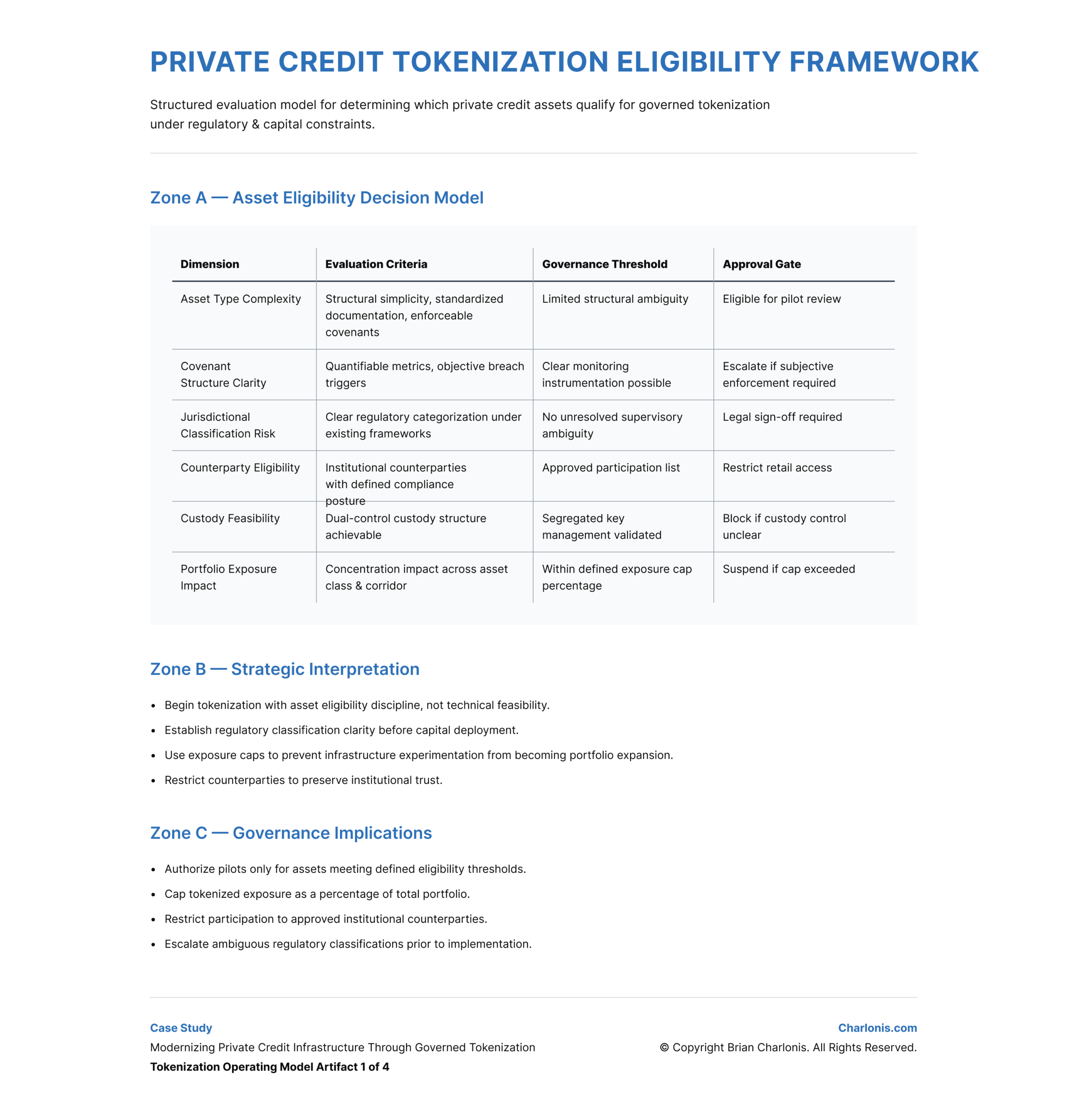

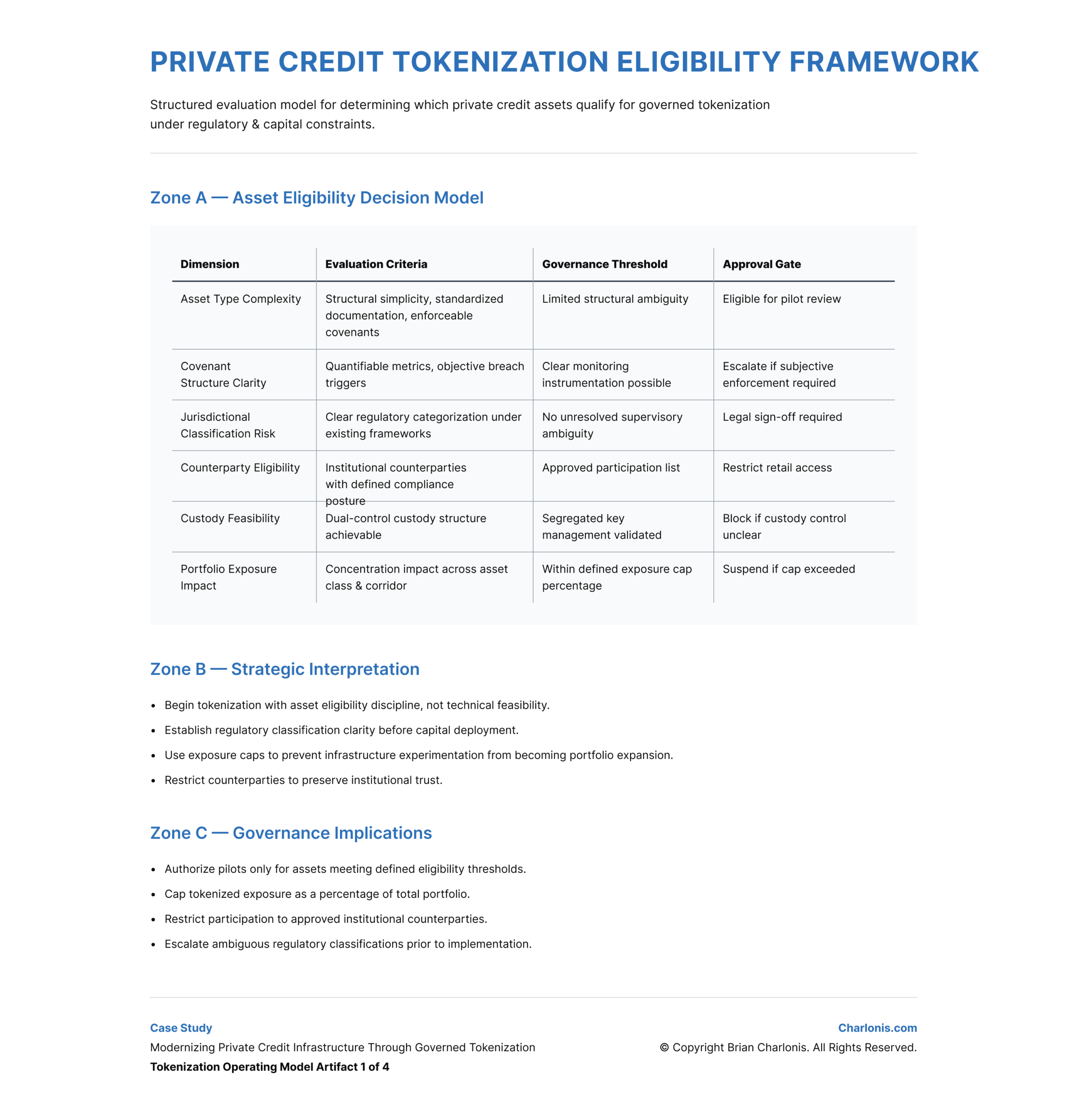

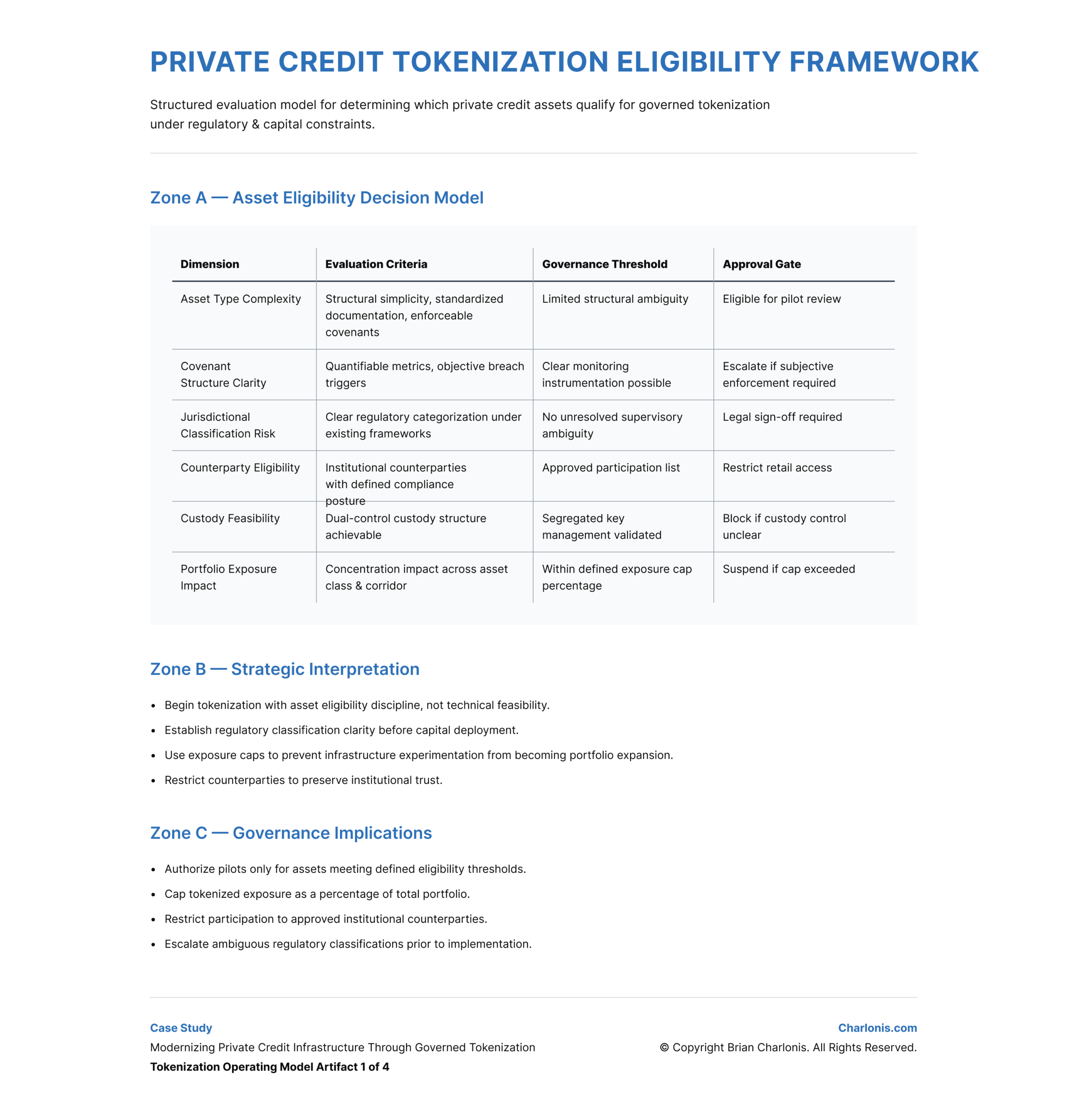

PRIVATE CREDIT TOKENIZATION ELIGIBILITY FRAMEWORK

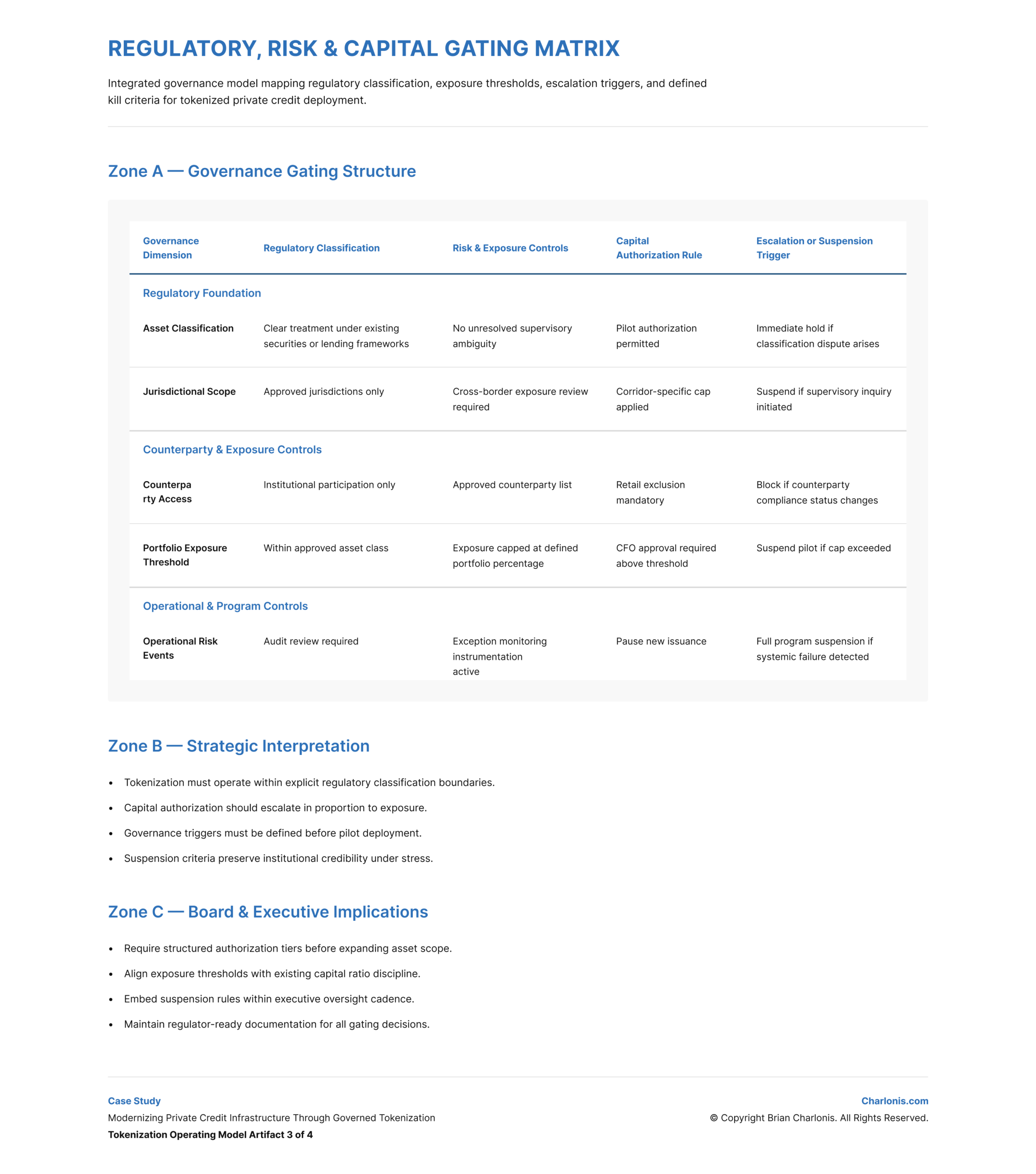

Regulatory & Capital Gating

Integrated regulatory classification and capital exposure controls into a unified governance matrix.

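- Exposure caps as a percentage of total portfolio

- Escalation triggers tied to defined breach thresholds

- Jurisdiction-specific authorization requirements

- Suspension criteria triggered by supervisory ambiguity or systemic risk

This artifact defines how tokenized exposure is controlled within institutional risk and regulatory constraints.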

View Figma Prototype:

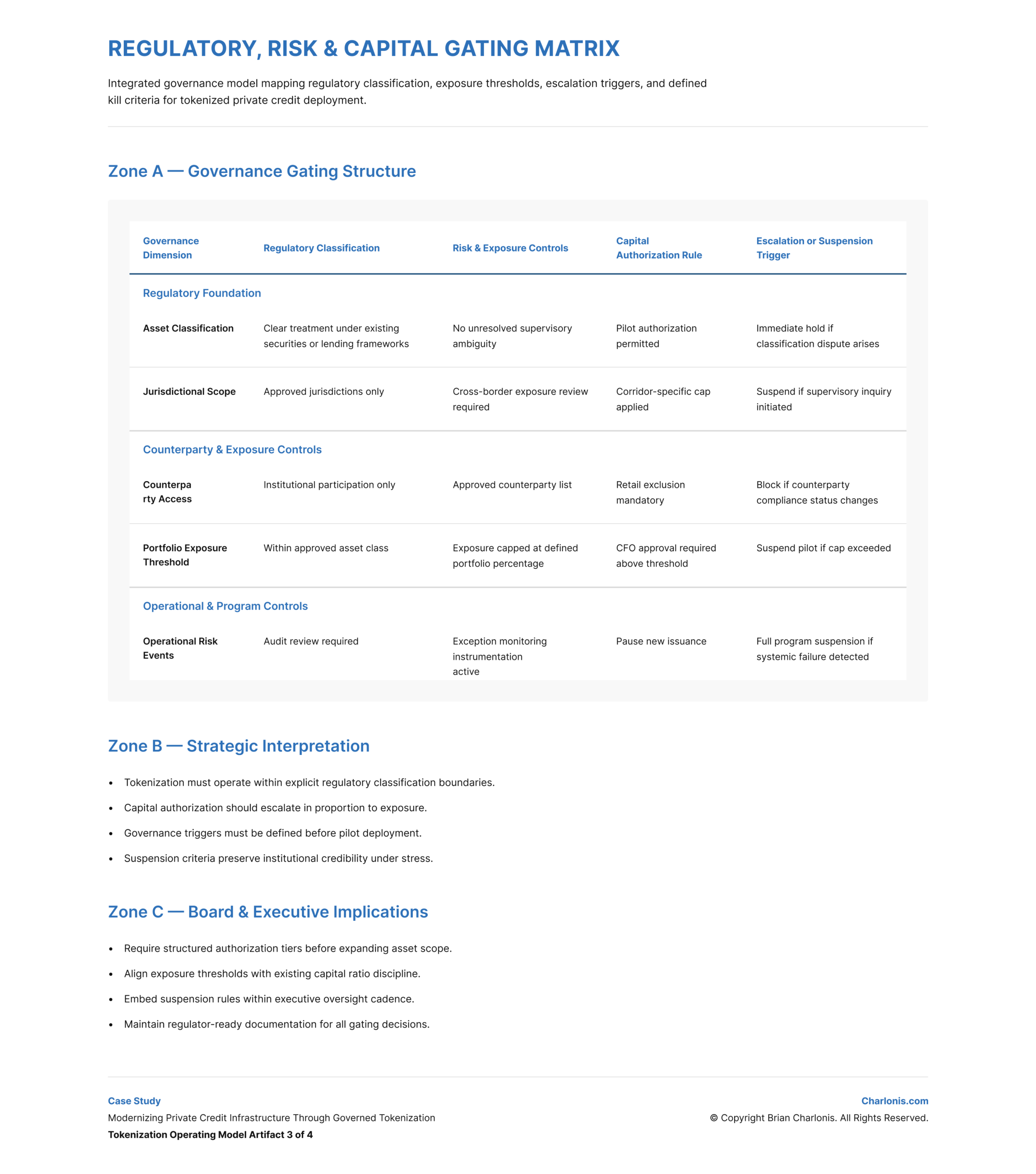

REGULATORY, RISK & CAPITAL GATING MATRIX
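As an illustration only, a gating matrix of this kind could be expressed as a lookup keyed by jurisdiction, with an exposure cap and a set of required sign-offs per entry. The jurisdiction codes, cap values, approval roles, and the names `GateRule`, `GATING_MATRIX`, and `check_allocation` below are hypothetical placeholders, not the institution's actual parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateRule:
    exposure_cap_pct: float              # cap as % of total portfolio (illustrative)
    required_approvals: tuple[str, ...]  # hypothetical sign-off functions


# Hypothetical matrix keyed by jurisdiction code.
# A jurisdiction with no entry is treated as not authorized.
GATING_MATRIX = {
    "UK": GateRule(exposure_cap_pct=5.0,
                   required_approvals=("credit_risk", "compliance")),
    "SG": GateRule(exposure_cap_pct=2.5,
                   required_approvals=("credit_risk", "compliance", "treasury")),
}


def check_allocation(jurisdiction, tokenized_exposure, total_portfolio, approvals):
    """Return a list of gating failures; an empty list means the allocation passes."""
    if total_portfolio <= 0:
        raise ValueError("total_portfolio must be positive")

    rule = GATING_MATRIX.get(jurisdiction)
    if rule is None:
        return [f"jurisdiction {jurisdiction} is not authorized"]

    failures = []
    exposure_pct = 100.0 * tokenized_exposure / total_portfolio
    if exposure_pct > rule.exposure_cap_pct:
        failures.append(
            f"exposure {exposure_pct:.2f}% exceeds cap {rule.exposure_cap_pct:.2f}%"
        )

    # Every required function must have signed off before capital is authorized.
    missing = [role for role in rule.required_approvals if role not in approvals]
    if missing:
        failures.append("missing approvals: " + ", ".join(missing))
    return failures
```

Returning a list of failures rather than a single boolean keeps each gating reason visible to the reviewing function, which suits a model where people, not code, make the authorization decision.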

AI Monitoring as Instrumentation

Embedded AI as a governed monitoring layer that detects covenant deviation signals, exposure concentration anomalies, and reporting irregularities.

AI supported oversight dashboards and executive reporting, while enforcement authority remained human-controlled.

This artifact defines how tokenized asset performance and risk signals are monitored and escalated.

View Figma Prototype:

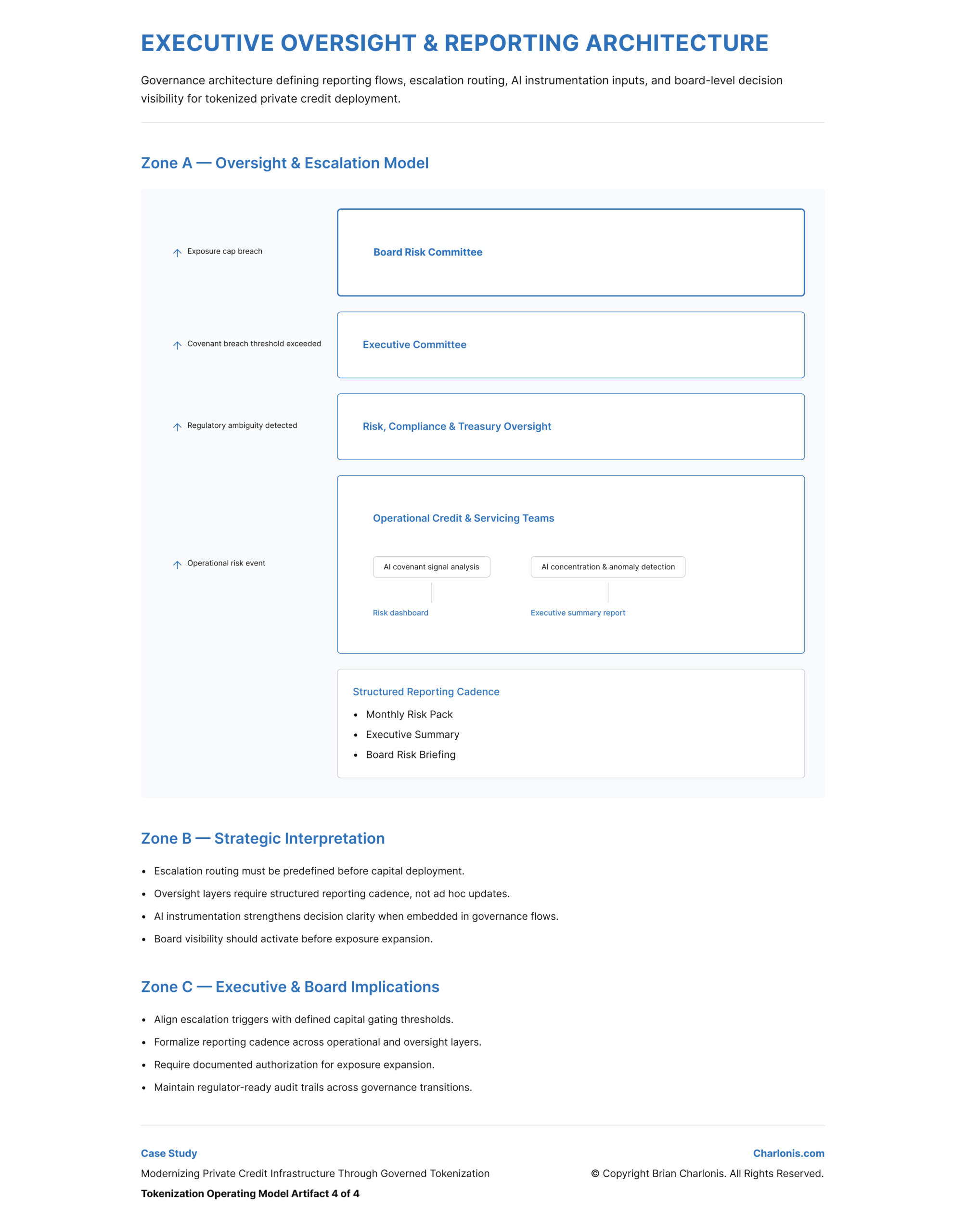

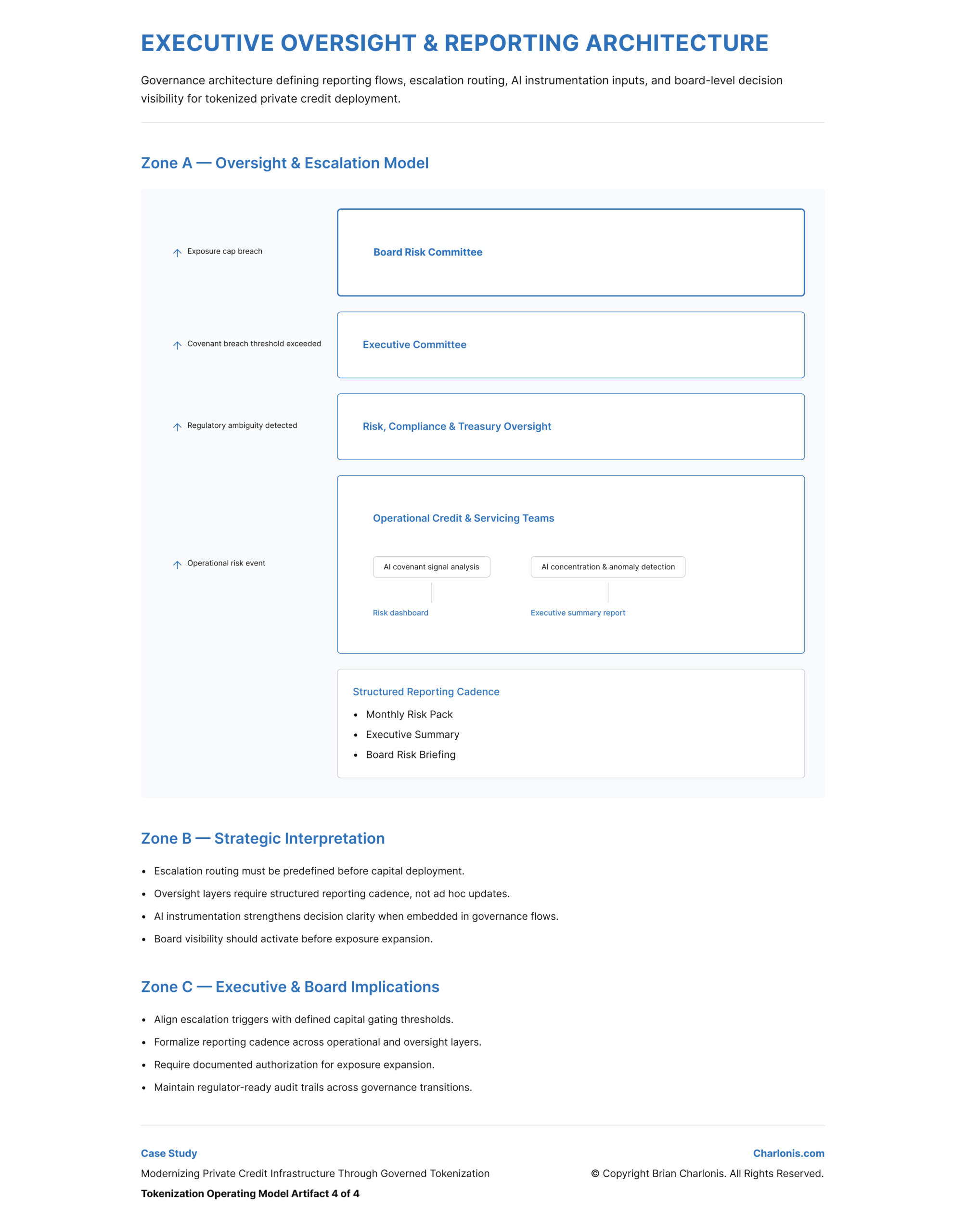

EXECUTIVE OVERSIGHT & REPORTING ARCHITECTURE
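A minimal sketch of what "AI as instrumentation" can mean in practice: the monitoring layer produces alerts for human review and exposes no enforcement action at all. The z-score rule here is a simple stand-in for whatever detection model is used, and the metric, threshold, and the names `Alert` and `detect_deviation` are assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class Alert:
    asset_id: str
    signal: str
    z_score: float
    requires_human_review: bool = True  # the monitor flags; it never enforces


def detect_deviation(asset_id, history, latest, z_threshold=3.0):
    """Return an Alert if `latest` deviates from `history` by more than
    `z_threshold` standard deviations, otherwise None."""
    if len(history) < 2:
        return None  # not enough data to estimate a baseline

    baseline = mean(history)
    spread = pstdev(history)
    if spread == 0:
        return None  # constant history: z-score is undefined in this sketch

    z = (latest - baseline) / spread
    if abs(z) > z_threshold:
        return Alert(asset_id=asset_id, signal="covenant_deviation", z_score=z)
    return None
```

For example, with a hypothetical coverage-ratio history of `[1.50, 1.52, 1.48, 1.51, 1.49]`, a latest reading of `1.20` produces an alert, while a reading of `1.50` does not. In both cases the output is only a signal routed to a reviewer.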

Enterprise & Experience Implication

- Tokenization changes how private credit assets are structured, tracked, and serviced across their lifecycle.

- When aligned with institutional workflows and governance controls, it improves transparency, reduces operational friction, and enhances decision visibility.

- Without integration and governance, tokenization introduces fragmentation, operational risk, and inconsistent asset management.

Tradeoffs & Decisions

- Prioritized operational continuity, governance alignment, and regulatory feasibility over rapid infrastructure transformation.

- This ensured controlled adoption and reduced institutional risk, while limiting the speed of innovation and delaying full realization of tokenization benefits.

- The approach improved lifecycle visibility and decision clarity, while requiring phased implementation and ongoing governance oversight.

Outcomes

Impact Summary

Institutionalized governance-first tokenization framework

Formalized capital discipline before infrastructure deployment

Embedded AI monitoring without weakening accountability

Strengthened executive and board oversight clarity

Success Metrics

- Reduced modeled reconciliation workload across servicing workflows

- Improved covenant monitoring latency in pilot simulations

- Reduced manual audit preparation burden

- Increased lifecycle transparency across multi-jurisdictional portfolios

Signals Monitored

- Exposure concentration by asset type and jurisdiction

- Covenant deviation frequency

- Reporting timeliness

- Operational exception rates

- Escalation trigger activation frequency
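The first signal in this list, exposure concentration by asset type and jurisdiction, reduces to a grouped share calculation. The sketch below assumes positions arrive as simple `(asset_type, jurisdiction, exposure)` tuples; the function names and the concentration limit are hypothetical.

```python
from collections import defaultdict


def concentration_shares(positions):
    """Aggregate exposure by (asset_type, jurisdiction) and return each
    bucket's share of total exposure as a fraction between 0 and 1."""
    totals = defaultdict(float)
    for asset_type, jurisdiction, exposure in positions:
        totals[(asset_type, jurisdiction)] += exposure

    grand_total = sum(totals.values())
    if grand_total <= 0:
        raise ValueError("total exposure must be positive")
    return {bucket: amount / grand_total for bucket, amount in totals.items()}


def concentration_breaches(shares, limit):
    """Return the buckets whose share exceeds the given limit, sorted by key."""
    return sorted(bucket for bucket, share in shares.items() if share > limit)
```

A bucket that exceeds the limit would feed the escalation triggers rather than trigger any automatic rebalancing.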

Decision Thresholds

- Automatic escalation above defined breach thresholds

- Human authorization required for covenant enforcement

- Pilot suspension if exposure cap exceeded

- Hold state pending review if regulatory ambiguity arises
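These thresholds can be read as an ordered decision rule. The sketch below is one possible encoding, assuming suspension takes precedence over a hold and a hold over escalation; that ordering, the field names, and the `Action` values are illustrative assumptions rather than the documented framework.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    CONTINUE = "continue"
    ESCALATE = "escalate"
    HOLD_PENDING_REVIEW = "hold_pending_review"
    SUSPEND_PILOT = "suspend_pilot"


@dataclass(frozen=True)
class PilotStatus:
    exposure_pct: float        # tokenized exposure as % of total portfolio
    exposure_cap_pct: float    # authorized cap (illustrative)
    covenant_deviations: int   # deviations observed in the reporting period
    breach_threshold: int      # deviations tolerated before escalation
    regulatory_ambiguity: bool


def decide(status: PilotStatus) -> Action:
    """Map pilot status to an action, most severe condition first."""
    if status.exposure_pct > status.exposure_cap_pct:
        return Action.SUSPEND_PILOT
    if status.regulatory_ambiguity:
        return Action.HOLD_PENDING_REVIEW
    if status.covenant_deviations > status.breach_threshold:
        return Action.ESCALATE
    return Action.CONTINUE


def authorize_enforcement(approver: Optional[str]) -> str:
    """Covenant enforcement proceeds only with a named human approver."""
    if not approver:
        raise PermissionError("covenant enforcement requires human authorization")
    return approver
```

Keeping `authorize_enforcement` separate from `decide` reflects the rule that escalation can be automatic while enforcement cannot.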

Actions Taken

- Authorized limited-scope pilot under defined capital cap

- Implemented dual-control custody validation

- Deferred secondary liquidity expansion pending supervisory alignment

- Institutionalized structured executive reporting cadence

Artifacts

Private Credit Tokenization Eligibility Framework

- Defined structured asset inclusion criteria, jurisdictional boundaries, and exposure thresholds.

- Served executive committee, treasury, and risk leadership.

- Enabled disciplined pilot authorization and prevented capital drift.

Tokenized Private Credit Lifecycle & Control Architecture

- Mapped origination through maturity with embedded governance checkpoints and AI instrumentation.

- Served operations, compliance, and technology teams.

- Clarified automation boundaries and preserved mandatory human oversight.

Regulatory, Risk & Capital Gating Matrix

- Integrated asset classification, capital authorization rules, escalation triggers, and suspension criteria.

- Served legal, treasury, and board risk committee.

- Formalized institutional experimentation under defined supervisory guardrails.

Executive Oversight & Reporting Architecture

- Defined escalation routing, monitored signals, reporting cadence, and board visibility structure.

- Served executive leadership and risk oversight functions.

- Standardized accountability across modernization phases.

Key Takeaways

Tokenization must begin with eligibility discipline, not infrastructure selection.

Capital gating protects institutional credibility during experimentation.

Lifecycle redesign determines governance strength more than smart contract logic.

AI strengthens oversight when positioned as governed monitoring instrumentation rather than as an enforcement authority.

Escalation and suspension criteria must be defined before pilot capital is authorized.

Reflection

What I Would Do Differently

- Initiate supervisory dialogue earlier in pilot structuring

- Conduct cross-border enforcement scenario simulations prior to asset selection

- Formalize investor disclosure standards before lifecycle redesign

AI Opportunities

- Portfolio-level anomaly clustering for early covenant risk detection

- Predictive exposure concentration modeling using structured simulation

- Automated compliance reporting assembly with validation checkpoints

Supporting AI Professional Specializations

University of Pennsylvania

IBM

Vanderbilt University

Web3 Opportunities

- Controlled exploration of institutional secondary transfer models

- Programmable escrow aligned with regulated custody frameworks

- Cross-institution interoperability standards under supervisory coordination

Supporting Web3 Professional Specializations

Duke University

INSEAD

University of Pennsylvania
