CASE STUDY

Human-in-the-Loop Governance for AI Decision Systems

AI

CX

Product Strategy

OPERATIONAL AI GOVERNANCE

AI Strategy Lead

A financial services organization needed to introduce AI into decision-making workflows without losing control, auditability, or user trust. Existing approaches treated AI outputs as either fully automated or fully manual, with no structured model for balancing speed and oversight.

I designed a human-in-the-loop decision governance system that defined how AI-driven decisions are evaluated, when human intervention is required, and how outcomes are monitored and adjusted over time. The work focused on structuring decision behavior, not just model performance.

Challenge

AI systems were being introduced into operational workflows without clear rules for when decisions should be automated versus reviewed by humans. This created inconsistent behavior, unclear accountability, and increased risk in regulated environments.

Teams lacked a structured approach to defining confidence thresholds, escalation triggers, and monitoring mechanisms, resulting in either over-reliance on automation or inefficient manual review processes.

The opportunity was to design a decision system that balanced automation with human oversight, ensuring AI could improve efficiency while maintaining control, transparency, and trust.

Key Drivers

- Decision latency across global credit teams

- Inconsistent override documentation

- Lack of structured escalation triggers

- Regulatory accountability risk

- Absence of quantified tolerance thresholds

- Limited executive visibility into AI performance

My Role

I led the design of the human-in-the-loop decision governance model, working across risk, compliance, operations, and product teams to define how AI decisions should be evaluated, escalated, and monitored.

My role focused on translating model behavior into operational decision rules, ensuring thresholds, escalation logic, and review processes aligned with regulatory expectations and business objectives.

I facilitated alignment across stakeholders to move from ad hoc decision handling to a structured, repeatable decision system.

Scope

- Governance architecture design

- Confidence threshold definition

- Escalation control framework

- Synthetic impact simulation modeling

- Executive dashboard definition

- AI operating model design

- Risk committee alignment

Approach & Methodology

Approach

- Systems-first governance design

- Threshold-driven decision architecture

- Human accountability embedded at high-risk tiers

- Simulation before scale

- Closed-loop oversight instrumentation

Methodology

- Risk-tier segmentation modeling

- Confidence band definition at 80% and 92% thresholds

- 15% regional override tolerance modeling

- Synthetic scenario simulation across conservative, balanced, and aggressive strategies

- Escalation matrix policy design

- Executive oversight dashboard modeling

- Operating model mapping across governance layers

Solution

The solution was a human-in-the-loop decision system structured around confidence thresholds, escalation logic, monitoring frameworks, and feedback loops. These components defined how AI decisions are executed, when human intervention is required, and how system performance evolves over time.

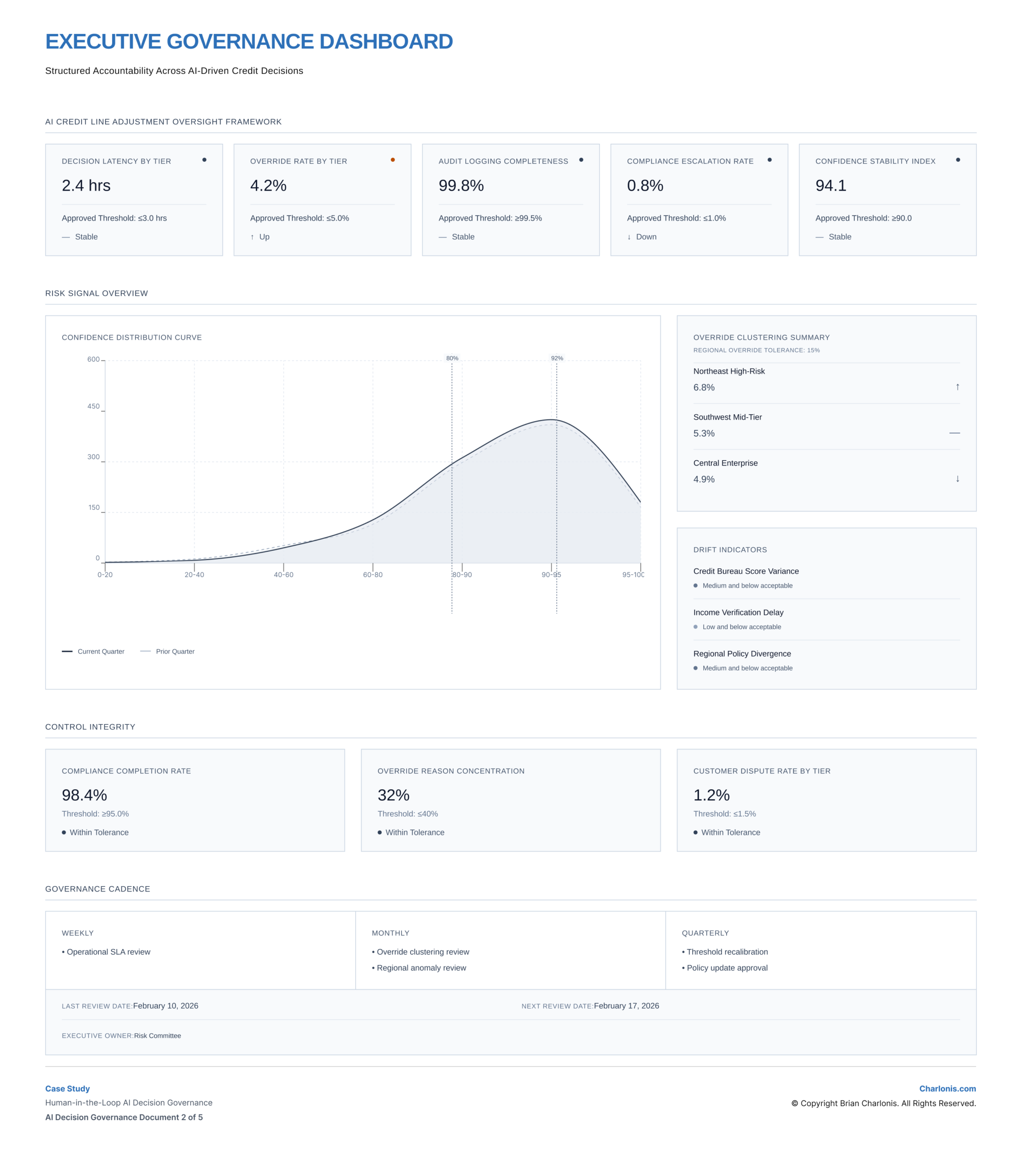

Human-in-the-Loop Governance Blueprint

Defined structured confidence bands to govern decision behavior:

- Low Risk: model confidence above 92%

- Medium Risk: model confidence from 80% to 92%

- High Risk: model confidence below 80%

Each tier determined how decisions were executed, reviewed, or escalated.

Escalation intensity, compliance checkpoints, and a 15% regional override tolerance threshold were embedded as explicit governance controls.

Automation handed off to human review at the defined thresholds, so higher-risk decisions remained subject to oversight while lower-risk decisions kept their efficiency gains.
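The banding above can be sketched as a small routing function. This is a minimal illustration, not the production logic: the 80% and 92% cut-offs come from the blueprint, while the names `Tier`, `HANDLING`, `classify`, and `route`, and the mapping of each tier to a handling path, are assumptions made for the example.

```python
from enum import Enum

LOW_RISK_FLOOR = 0.92     # confidence above this is Low Risk
HIGH_RISK_CEILING = 0.80  # confidence below this is High Risk


class Tier(Enum):
    LOW = "low_risk"
    MEDIUM = "medium_risk"
    HIGH = "high_risk"


# Assumed tier-to-handling mapping: the case study only states that each
# tier determined whether a decision was executed, reviewed, or escalated.
HANDLING = {
    Tier.LOW: "auto_execute",
    Tier.MEDIUM: "human_review",
    Tier.HIGH: "escalate",
}


def classify(confidence: float) -> Tier:
    """Map a model confidence score in [0, 1] to a risk tier."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence > LOW_RISK_FLOOR:
        return Tier.LOW
    if confidence < HIGH_RISK_CEILING:
        return Tier.HIGH
    # Both boundary values (0.80 and 0.92) fall in the medium band.
    return Tier.MEDIUM


def route(confidence: float) -> str:
    """Return the handling path for a decision at this confidence."""
    return HANDLING[classify(confidence)]
```

Under these assumptions, `route(0.95)` returns `"auto_execute"`, `route(0.92)` returns `"human_review"`, and `route(0.60)` returns `"escalate"`. Keeping the boundary values in the medium band errs toward review rather than automation.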

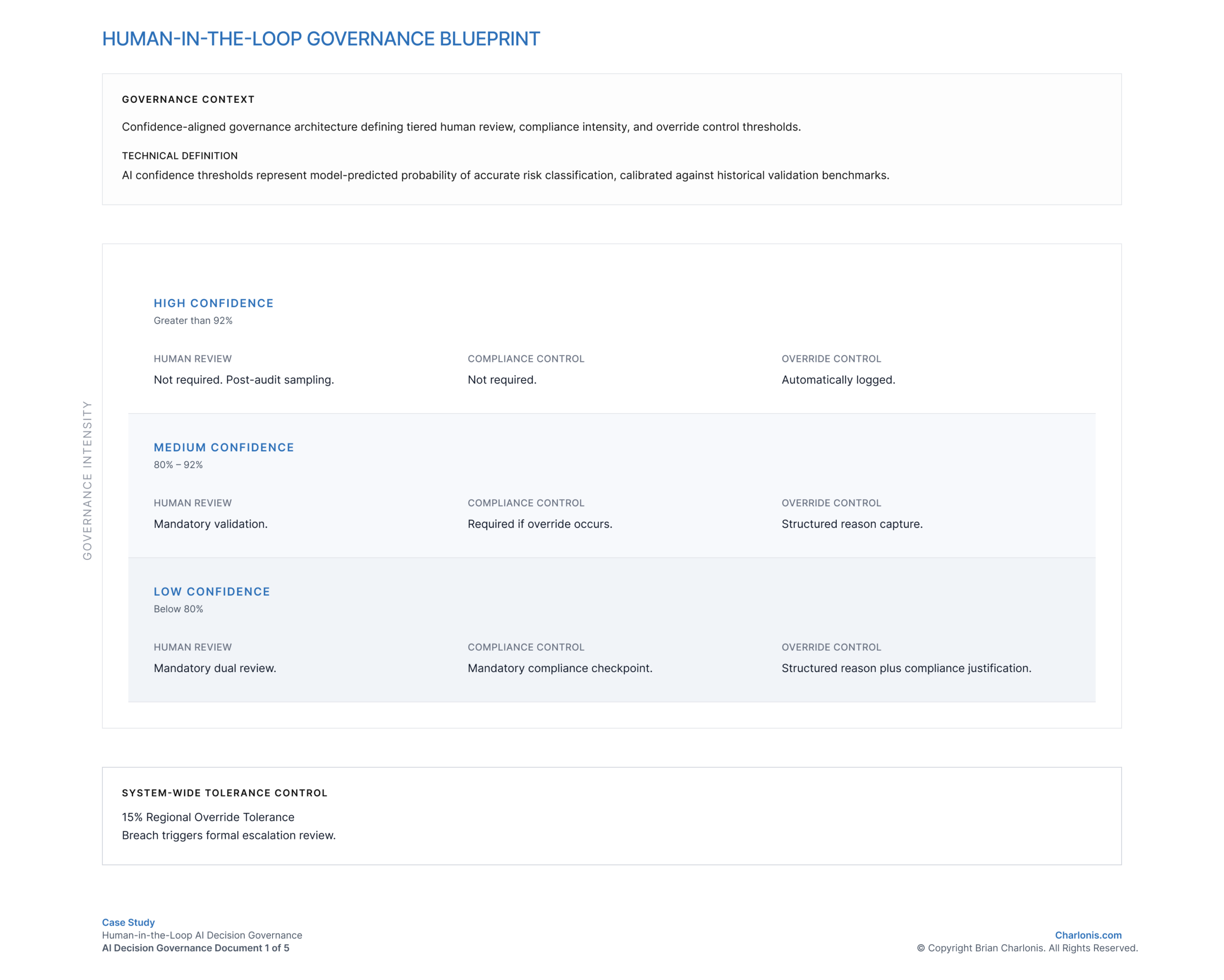

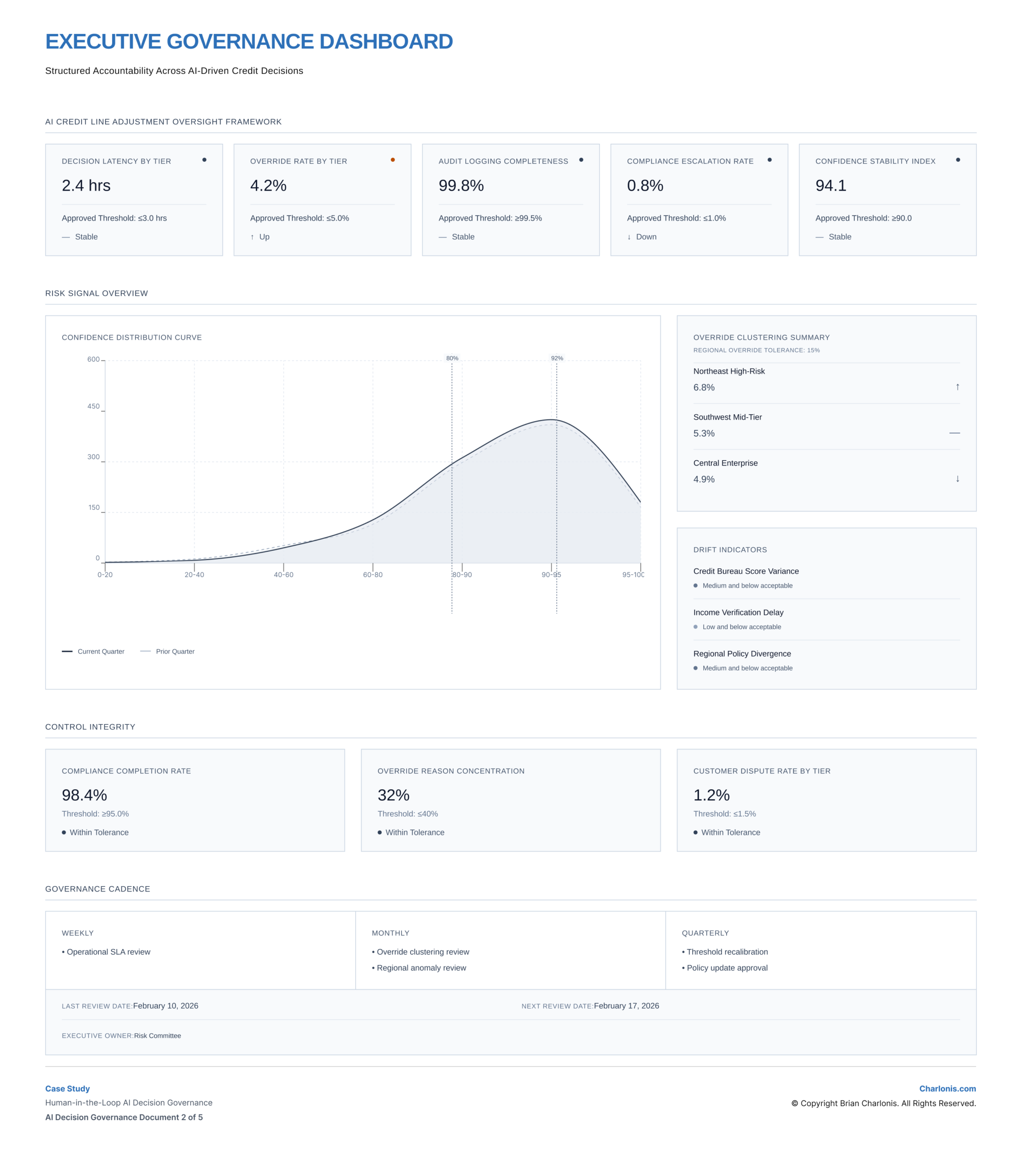

Executive Governance Dashboard

Designed a board-level oversight system tracking:

- Decision latency by tier

- Override rate vs 15% tolerance

- Audit logging completeness

- Confidence distribution stability

- Drift indicators

Confidence thresholds at 80% and 92% were directly embedded into monitoring, aligning decision logic with governance visibility.

Governance cadence was structured across weekly, monthly, and quarterly review cycles to support continuous oversight and recalibration.
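As a rough illustration of the "override rate vs 15% tolerance" metric, the sketch below computes per-region override rates from decision records and lists the regions that exceed the tolerance. The record shape (a `(region, was_overridden)` pair), the function names, and the sample regions are hypothetical; only the 15% tolerance comes from the case study.

```python
from collections import defaultdict

OVERRIDE_TOLERANCE = 0.15  # regional override tolerance from the blueprint


def override_rates(decisions):
    """Return {region: override rate} from (region, was_overridden) pairs."""
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for region, was_overridden in decisions:
        totals[region] += 1
        if was_overridden:
            overrides[region] += 1
    return {region: overrides[region] / totals[region] for region in totals}


def regions_over_tolerance(decisions, tolerance=OVERRIDE_TOLERANCE):
    """List regions whose override rate strictly exceeds the tolerance."""
    rates = override_rates(decisions)
    return sorted(region for region, rate in rates.items() if rate > tolerance)


# Hypothetical sample: 2 of 10 decisions overridden in EMEA, 1 of 10 in APAC.
sample = (
    [("EMEA", True)] * 2 + [("EMEA", False)] * 8
    + [("APAC", True)] * 1 + [("APAC", False)] * 9
)
print(regions_over_tolerance(sample))  # ['EMEA']
```

A real dashboard would likely compute this over a rolling window aligned to the weekly, monthly, and quarterly review cycles; the window choice is left out of the sketch.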

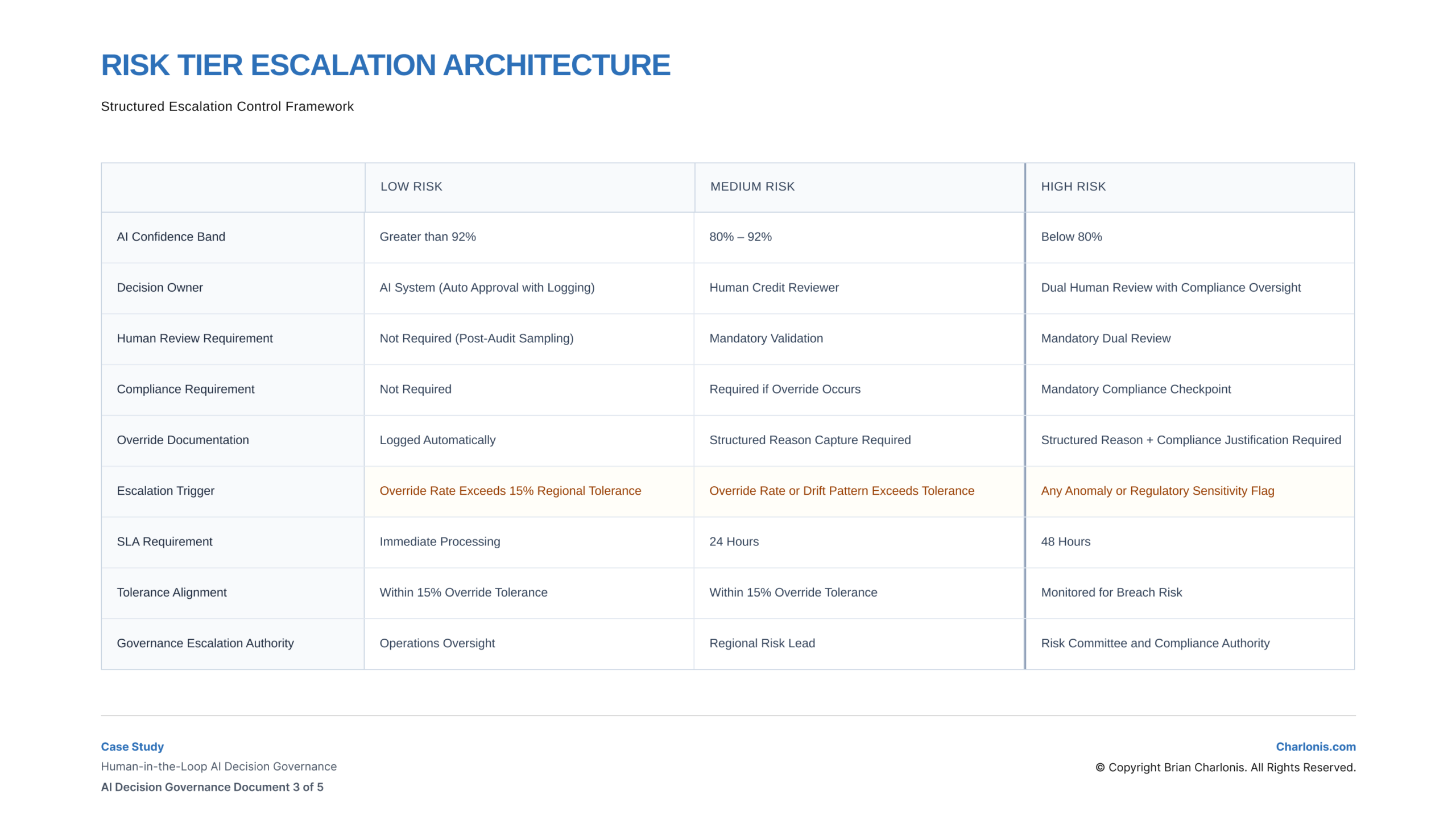

Risk Tier Escalation Architecture

Established a structured escalation model defining:

- Decision ownership by tier

- SLA requirements (Immediate, 24h, 48h)

- Compliance triggers

- Override documentation standards

- Escalation authority up to Risk Committee

Escalation triggers were explicitly defined, including breach of the 15% override tolerance and detection of drift patterns.

This ensured consistent handling of edge cases and clear accountability across decision layers.
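One way to make the SLA requirements checkable is sketched below. The three SLA labels come from the escalation model; the 15-minute window for "immediate" is a placeholder assumption, since the case study does not quantify it, and the function names are hypothetical. Which tier carries which SLA is deliberately left out because the source does not state it.

```python
from datetime import datetime, timedelta

# SLA labels are from the case study. The "immediate" window is an assumed
# placeholder; the source does not say how long "immediate" is.
SLA_WINDOWS = {
    "immediate": timedelta(minutes=15),  # hypothetical grace period
    "24h": timedelta(hours=24),
    "48h": timedelta(hours=48),
}


def sla_deadline(opened_at: datetime, sla: str) -> datetime:
    """Return the time by which an escalated case must be resolved."""
    return opened_at + SLA_WINDOWS[sla]


def is_breached(opened_at, sla, resolved_at=None, now=None) -> bool:
    """True if the case was resolved late, or is still open past its deadline."""
    deadline = sla_deadline(opened_at, sla)
    if resolved_at is not None:
        reference = resolved_at
    else:
        reference = now if now is not None else datetime.now()
    return reference > deadline
```

For a case opened at 09:00 on 1 January under the `"24h"` SLA, a resolution at 08:59 the next day is within the SLA and one at 09:01 is a breach. Counting breaches per tier and per region would feed the "SLA breach patterns" signal.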

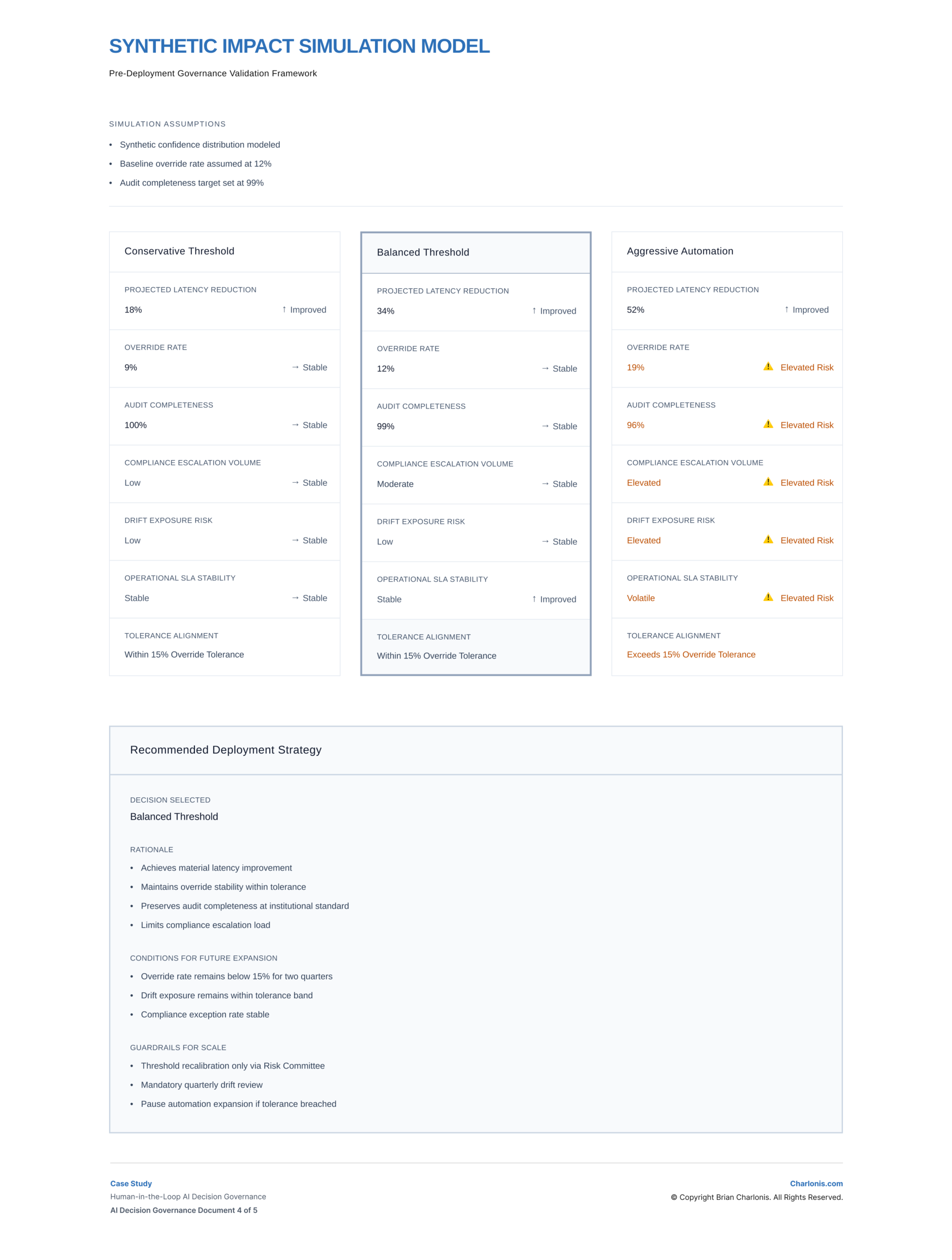

Synthetic Impact Simulation Model

Modeled three deployment scenarios to evaluate tradeoffs between efficiency, control, and compliance:

Conservative

- Latency Reduction 18%

- Override Rate 9%

- Audit Completeness 100%

Balanced

- Latency Reduction 34%

- Override Rate 12%

- Audit Completeness 99%

Aggressive

- Latency Reduction 52%

- Override Rate 19%

- Audit Completeness 96%

The aggressive scenario exceeded the 15% override tolerance and increased exposure to drift and compliance risk.

Balanced Was Selected

- Material latency improvement

- Override stability within tolerance

- Audit integrity preservation

- Controlled compliance load

Scaling conditions and governance thresholds were defined prior to deployment to ensure controlled expansion.
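The scenario comparison can be reproduced with a few lines of code. The figures below are the modeled values listed above. The selection rule (drop any scenario over the 15% override tolerance, then take the largest latency reduction) is a simplifying assumption for illustration; the actual decision also weighed audit integrity and compliance load.

```python
from dataclasses import dataclass

OVERRIDE_TOLERANCE = 0.15  # regional override tolerance from the blueprint


@dataclass(frozen=True)
class Scenario:
    name: str
    latency_reduction: float
    override_rate: float
    audit_completeness: float


# Modeled values from the synthetic impact simulation.
SCENARIOS = [
    Scenario("conservative", 0.18, 0.09, 1.00),
    Scenario("balanced", 0.34, 0.12, 0.99),
    Scenario("aggressive", 0.52, 0.19, 0.96),
]


def select_scenario(scenarios, tolerance=OVERRIDE_TOLERANCE):
    """Pick the within-tolerance scenario with the largest latency reduction."""
    eligible = [s for s in scenarios if s.override_rate <= tolerance]
    if not eligible:
        raise ValueError("no scenario stays within the override tolerance")
    return max(eligible, key=lambda s: s.latency_reduction)


print(select_scenario(SCENARIOS).name)  # balanced
```

The aggressive scenario is filtered out by its 19% override rate, and balanced beats conservative on latency reduction, which matches the selection described above.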

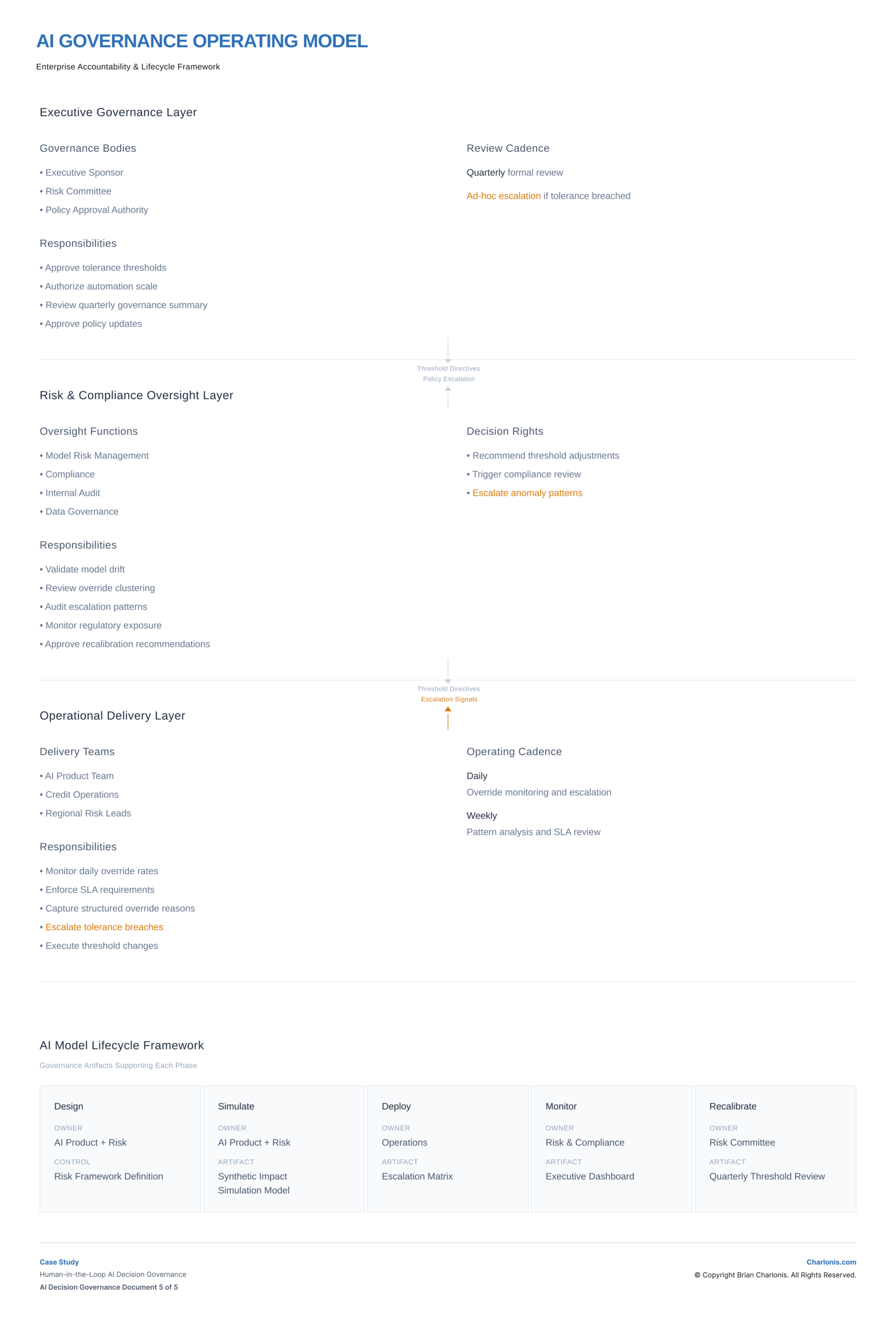

AI Governance Operating Model

Mapped accountability and control across:

- Executive Governance Layer

- Risk & Compliance Oversight

- Operational Delivery

Defined upward escalation signals and downward threshold directives, ensuring alignment between decision execution and governance control.

Design → Simulate → Deploy → Monitor → Recalibrate

Each phase was tied to governance artifacts, creating a closed-loop decision system that continuously adjusted based on performance and risk signals.

System Design Framing

- This system positioned experience design as a mechanism for trust, control, and accountability.

- Automation accelerated low-risk decisions, while governance structures controlled exposure, escalation, and intervention in higher-risk scenarios.

Enterprise & Experience Implication

- Human-in-the-loop systems fundamentally shape how users experience AI.

- Confidence thresholds, escalation rules, and feedback mechanisms determine when users trust automation, when they are asked to intervene, and how decisions are understood and acted upon.

- Poorly designed systems create friction, confusion, and distrust. Well-designed systems create clarity, predictability, and confidence in decision-making.

Tradeoffs & Decisions

- Prioritized controlled automation over maximum efficiency, ensuring high-risk decisions remained subject to human oversight.

- This reduced the speed and scale of automation but improved transparency, auditability, and user trust.

- Threshold calibration introduced ongoing operational complexity, requiring continuous monitoring and adjustment to balance false positives, false negatives, and review workload.

- The system improved decision consistency and reduced unmanaged risk, while introducing dependencies on governance maturity and operational discipline.

Outcomes

Established a structured decision system that improved consistency, reduced unmanaged risk, and enabled AI-driven workflows to scale within defined governance boundaries.

Impact Summary

Reduced decision latency without sacrificing regulatory accountability

Formalized override tolerance as a governance lever

Established repeatable human-in-the-loop AI deployment model

Integrated AI governance into enterprise operating structure

Success Metrics

- 34% modeled latency reduction under balanced deployment

- Modeled override rate of 12% under balanced deployment, within the 15% tolerance

- Modeled audit completeness of 99% under balanced deployment

- Compliance escalation volume controlled

Signals Monitored

- Confidence distribution drift

- Regional override clustering

- SLA breach patterns

- Regulatory sensitivity flags

Decision Thresholds

- Escalate if override rate exceeds 15% regionally

- Trigger compliance review on anomaly detection

- Pause automation expansion if drift tolerance breached

- Recalibrate thresholds quarterly via Risk Committee
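The first three thresholds above are event-driven and can be expressed as a simple rule check. This is a sketch under stated assumptions: the `Signals` fields, the action labels, and the function name are hypothetical, and the upstream anomaly and drift detectors are treated as already-computed boolean inputs. The quarterly recalibration is calendar-driven and is not modeled here.

```python
from dataclasses import dataclass

OVERRIDE_TOLERANCE = 0.15  # regional override tolerance from the blueprint


@dataclass
class Signals:
    regional_override_rates: dict   # region -> override rate in [0, 1]
    anomaly_detected: bool          # output of an upstream anomaly check
    drift_tolerance_breached: bool  # output of an upstream drift check


def governance_actions(signals: Signals) -> list:
    """Translate monitored signals into the governance actions they trigger."""
    actions = []
    for region, rate in sorted(signals.regional_override_rates.items()):
        if rate > OVERRIDE_TOLERANCE:
            actions.append(f"escalate:{region}")
    if signals.anomaly_detected:
        actions.append("compliance_review")
    if signals.drift_tolerance_breached:
        actions.append("pause_automation_expansion")
    return actions


# Hypothetical snapshot: one region over tolerance, drift breached.
snapshot = Signals({"EMEA": 0.19, "APAC": 0.12}, False, True)
print(governance_actions(snapshot))
# ['escalate:EMEA', 'pause_automation_expansion']
```

Returning a list of action labels rather than acting directly keeps the rule evaluation auditable: the same output can be logged, shown on the dashboard, and routed to the owning team.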

Actions Taken

- Selected balanced automation threshold

- Formalized escalation SLAs

- Embedded tolerance thresholds into executive monitoring

- Instituted quarterly governance recalibration

Artifacts

Human-in-the-Loop Governance Blueprint

- Defined structured confidence bands, escalation logic, and tolerance levers.

- Served risk leadership and AI product alignment.

- Established architecture for automation with accountability.

Risk Tier Escalation Architecture

- Formalized decision ownership, SLA controls, and compliance triggers.

- Served operations and risk governance teams.

- Prevented uncontrolled automation drift.

Executive Governance Dashboard

- Board-level oversight instrument linking thresholds to performance signals.

- Served executive and risk committee review.

- Enabled tolerance-based monitoring.

Synthetic Impact Simulation Model

- Modeled quantified deployment tradeoffs prior to scale.

- Served executive decision-making.

- Prevented premature automation expansion.

AI Governance Operating Model

- Mapped accountability across enterprise layers and lifecycle phases.

- Served cross-functional leadership alignment.

- Embedded governance into operating structure.

Key Takeaways

AI systems require defined decision rules, not just accurate models

Confidence thresholds directly shape user experience and trust

Human-in-the-loop design balances efficiency with control

Monitoring and feedback loops are essential for sustained performance

Reflection

What I Would Do Differently

- Introduce synthetic stress-testing scenarios earlier in design

- Expand scenario modeling to include regulatory stress environments

- Formalize internal audit integration at pre-deployment stage

AI Opportunities

- Real-time drift anomaly detection using adaptive monitoring frameworks

- Confidence recalibration modeling using probabilistic validation approaches

- Structured explainability overlays integrated into dashboard layer

Supporting AI Professional Specializations

University of Pennsylvania

AI for Business Specialization

Built foundational knowledge of AI applications across marketing, finance, and people management, with emphasis on AI strategy and governance for business leaders.

IBM

Generative AI for Executives & Business Leaders Specialization

Developed a strategic understanding of generative AI, including foundational concepts, integration strategies, and business use cases for practical executive decision-making.

Vanderbilt University

Generative AI Strategic Leader Specialization

Learned advanced generative AI concepts, including deep research, prompt engineering, and agentic AI, with a focus on strategic leadership and decision-making.

Web3 Opportunities

- Immutable override logging using blockchain-based audit trails

- Smart contract-enforced compliance triggers for high-risk tier escalation

Supporting Web3 Professional Specializations

Duke University

Decentralized Finance (DeFi): The Future of Finance Specialization

Gained expertise in DeFi infrastructure, primitives, opportunities, and risks, enabling evaluation and strategy for decentralized financial systems.

INSEAD

Blockchain Revolution Specialization

Explored blockchain technologies and applications, focusing on transactions, business opportunities, and strategic analysis for enterprise adoption.

University of Pennsylvania

FinTech: Foundations & Applications of Financial Technology Specialization

Developed a comprehensive understanding of fintech ecosystems, including payments, digital currencies, lending, and the application of AI, InsurTech, and real estate technology within regulated financial environments.

Governance Before Scale.

AI success in finance depends on escalation logic, override visibility, and accountability architecture. If you are modernizing financial decision systems, let’s connect to discuss governance-centered AI transformation.